Approach to Master Data Management at Crossrail

Document

type: Micro-report

Author:

Pontus Osmar Palmgren BSc

Publication

Date: 14/03/2017

-

Abstract

When a new data warehouse was implemented to improve programme reporting, the inconsistent master data in the source systems became apparent. The master data in the source systems were aligned, a new master data system was made the ‘single source of the truth’ and all data was placed under change control. The result was improved data quality and reporting.

This paper will be of interest to any large programme looking to implement master data management to control master data within the organisation.

-

Read the full document

Introduction and Industry Context

It’s critical for any large and complex programme to accurately monitor and analyse its performance. The Programme Controls team at Crossrail does this using a range of metrics and measures which are reported to the management board and sponsors on a regular basis. These reports and dashboards are generated by Crossrail’s data warehouse on a regular basis. Crossrail’s data warehouse was developed by the in-house development team on SQL server and it’s owned and managed by the Programme Controls Reporting team.

The data warehouse is fed data from a range of source systems, such as finance, risk, planning, cost etc. It is all supported by the Work (WBS), Cost (CBS) and Organisation (OBS) Breakdown Structures. If the master data or breakdown structures in these source systems aren’t consistently aligned the data warehouse can’t produce the reports and dashboards required to effectively manage the project.

Crossrail’s approach to Master Data Management

Prior to the data warehouse being implemented, individual reports were generated in each of the source systems, before being collated to produce a programme-wide report. This was laborious, slow and traceability couldn’t be guaranteed. To address these issues a project was initiated to implement a data warehouse where data from all source systems could be imported and reports quickly generated. However it was soon realised that the master data and breakdown structures were not consistent across the source systems and an aggregated report could not be achieved in the data warehouse unless the master data was aligned.

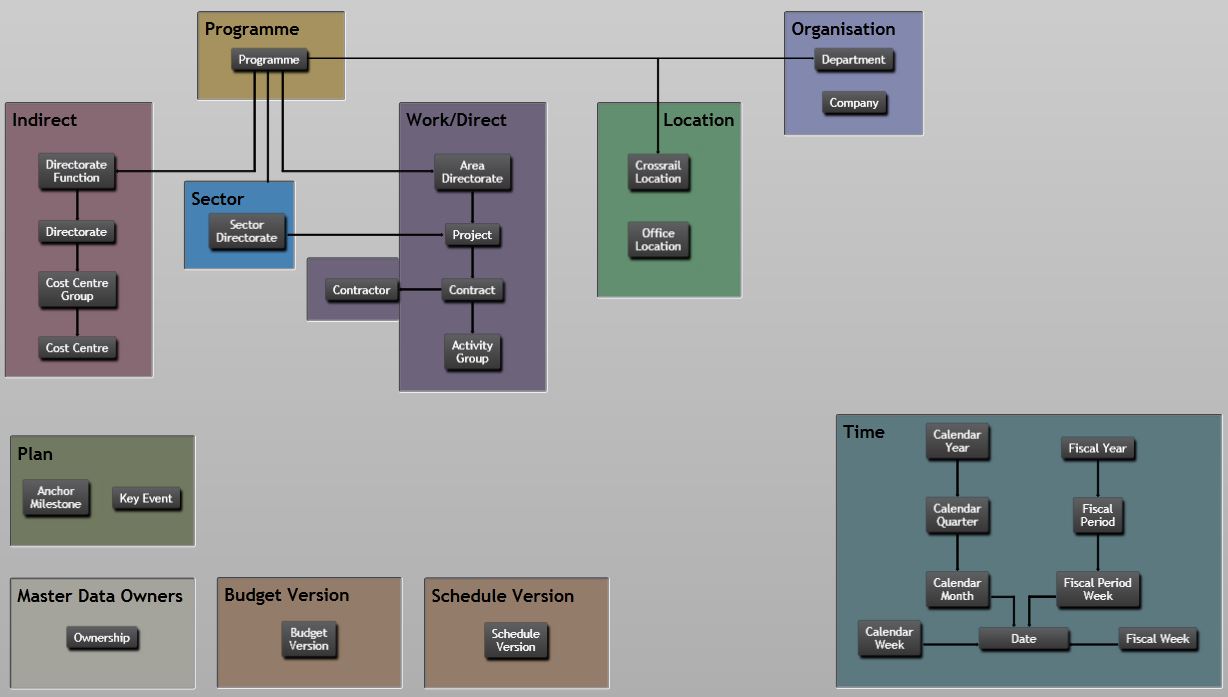

In order to ensure all master data was aligned, a new project was setup to define and agree the master data model. This model was the initial list of master data and breakdown structures. Since the Crossrail data warehouse was running on Microsoft SQL it was decided to use Microsoft SQL Server 2008 R2 Master Data Services for the management of master data. A custom front end user interface (fig.1) was also made available to the entire organisation for easy accessibility and understanding of the master data. The two most important sections are the Direct and the Indirect breakdowns of the Programme element. These sections are organised in hierarchies where each master data element in a list will relate to a master data element in the level above. For example several Contracts will be related to one Project.

There are also several sections with single, unrelated master data lists, for example all Anchor Milestones defined for the Crossrail programme.Fig 1 – Master data model front end interface

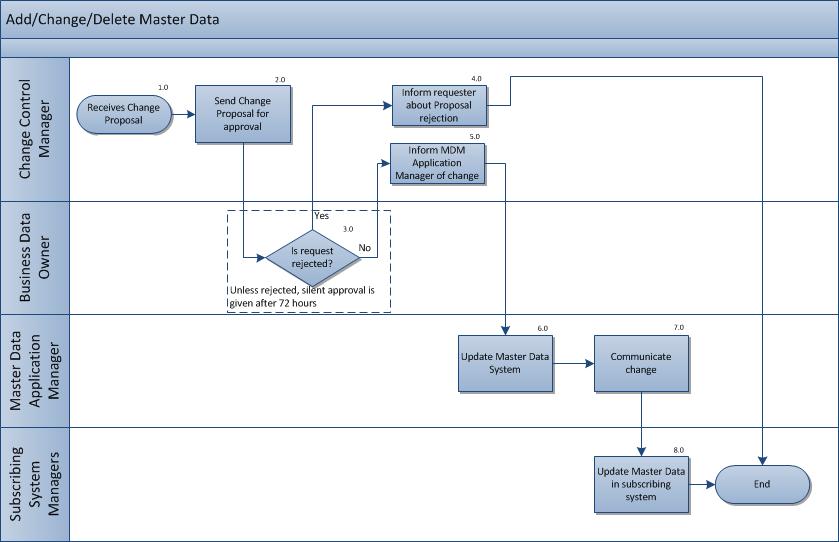

The master data model was then placed under change control, managed by the Programme Controls team. This process ensured all changes were subject to an evaluation before implementation, were approved by appropriate managers; and, were communicated to the owners of all the relevant source systems (fig 2). Details on Crossrail’s change control process can be found in a further paper.

Fig 2 – Master data change control process

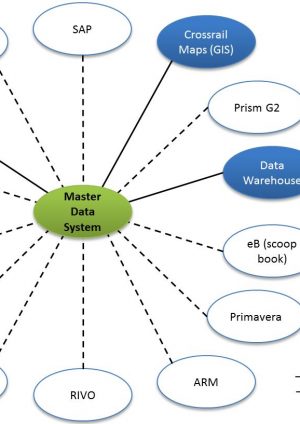

Since Crossrail has a finite end, and all systems a limited lifespan, it was decided that complicated system integrations were to be kept to a minimum. The data warehouse was given automated integration to the master data system, but all other systems had manual integration (fig. 3). This meant the system administrators for each source system had to subscribe to changes in the master data system and make the corresponding changes manually in their systems.

Fig 3 – Master data system integration

Where there was discrepancy between an item of master data held in a source system and that within the master data system, the master data system was regarded as being correct and the source system as non-compliant. A report was produced in the data warehouse to monitor the compliance of master data in the source systems. Any data loaded into the data warehouse where the master data didn’t correspond to the data in the master data system would end up on an ‘unallocated data report’. The master data could then be updated in the source system in preparation for the next load to the data warehouse.

The original master data model only contained the most important elements to manage Crossrail’s periodic reporting in the data warehouse such as the Direct and Indirect breakdown of the Programme and the reporting periods defined by Transport for London. Since then more elements have been added, such as Office Location and Department, and the model has had to be changed due to organisational changes. More subscribing systems have also been added and some in-house systems have been given an automated integration. Changes like this are to be expected during the life of a large scale project but they must be managed and all changes reflected in all affected systems.

The master data system ensures that all master data and the WBS, CBS and OBS are only changed under control. Changes are implemented in the master data system which then provides the single source of the approved master data, WBS, OBS and CBS which is reflected in the Programme’s control and reporting systems.Lessons Learned

A master data model, even if it’s only for a few important elements, should be introduced and placed under change control as soon as possible. It is easier to introduce proper master data in a source system before too much transactional data has been recorded.

If there is no automatic link between the source system and the master data system to ensure aligned master data, there must be another way of controlling data compliance. Since master data from the master data system as well as the source systems were collated in the data warehouse, this was the obvious place to control data compliance at Crossrail.

An ‘unallocated data report’ was produced in the data warehouse to highlight any records with incorrect master data.

Since new master data had to be approved by many different users in the organisation, a silent approval was introduced. If no one objected to a master data change in 72 hours the change was automatically approved (see Fig. 2 for the master data change control process).

Recommendations for Future Projects

Agree a master data model and master data as early as possible in the project. It’s complicated and time consuming to clean and harmonise the master data in all systems when they have been in production for a while.

Clearly identify who is the owner of each master data element. Ensure they understand the importance of the master data and their responsibilities.

Make sure to remove any legacy documents or sources when a master data system has been introduced.

Allow system owners to ‘subscribe’ to the master data elements they are interested in so they won’t get information overload related to master data elements not used in their system. -

Authors

Pontus Osmar Palmgren BSc - Crossrail Ltd

Pontus is the Principal Business Analyst at Crossrail. Pontus’ team has played a key role in the implementation of a range of important business systems to support the delivery of a world class railway. The team has been working on business change projects, in-house software development and third-party application implementations.

Over the past 13 years Pontus has worked in different IT organisations as Business Analyst, Project Manager and System Owner in the UK, Sweden, the United States and Ireland. He holds a Bachelor of Science in Informatics and one in Economics.