Health and Safety Performance Index (HSPI)

Document type: Case Study

Author: Steve Crofts BSc, CMIOSH

Publication Date: 27/09/2016

-

Abstract

The Health and Safety Performance Index (HSPI) is Crossrail’s leading indicator measurement programme, focussed on Crossrail’s six Target Zero pillars, which are considered the foundation for good health and safety management. These are: Leadership & Behaviour; Design for H&S; Communications; Workplace Health; Workplace Safety; and Performance Improvement.

The HSPI score is made up of two measures in each of the six Target Zero pillars: the periodic Leading Indicator Performance score (measuring ‘effort’ in terms of inputs / activities) and the latest Gateway score (measuring ‘effectiveness’ as an output of contractors’ activities).

This case study outlines the various phases of the HSPI, as it developed as a tool, capturing what worked well and where changes were required.

The HSPI has changed the way contractors look at performance measurement. Although there is still a focus on the end goal – Target Zero – on a period-by-period basis, the focus has shifted to proactive activity. Healthy competition has developed between the different contracts and each has strived to identify opportunities to improve their performance.

This case study is relevant to all major projects or large companies who monitor health and safety performance of their supply chain, a wide number of contracts/contractors, divisions or departments.

-


Introduction and Industry Context

Crossrail uses a combination of leading and lagging indicators to measure safety performance across the Project. Leading indicators focus on proactive activities and processes aimed at improving health and safety performance and culture into the future. Lagging indicators are the more traditionally accepted measures relating to failures, such as accidents, injury rates and lost time; in effect, they record negative historic outcomes once they have occurred.

The concept of using a balance of leading and lagging indicators is not new in UK industry, but it gained real momentum in 2007 following publication of the report of the BP U.S. Refineries Independent Safety Review Panel [1] (the Baker Report) into the 2005 BP Texas City Refinery accident. The report recommended that BP create a set of integrated performance indicators, based on both leading and lagging indicators, to monitor process safety. This was supported by other contemporary work by the UK HSE.

Following a management review in May 2012, Crossrail agreed to devise a new, ambitious way to measure health and safety that focused on leading indicators. This approach was designed to provide a consistent structure for reporting and to promote a framework to drive health and safety excellence among Crossrail’s contractors. The Health and Safety Performance Index (HSPI) was launched later in 2012 to address this need and to support the existing lagging indicators that were already in use (accident frequency rates).

The HSPI has been in use at Crossrail ever since, receiving annual updates to ensure it remains relevant to the work activities and health and safety risks the programme experiences. The HSPI has been one of the fundamentals of Crossrail’s overall Target Zero approach to Health and Safety improvement.

Target Zero Strategy

In addition to the existing use of lagging indicators to measure the health and safety performance of Crossrail’s contractors, the HSPI was developed and implemented in 2012 to provide a ‘leading indicator’ focused approach. It was launched to coincide with the increase in major construction works on the programme. It provided a mechanism to measure health and safety performance in an innovative way, which both focused on leading indicators and fitted within the wider corporate supplier performance measurement structure (see separate learning legacy on Crossrail’s Performance Assurance Framework). It also utilised contractors’ existing performance measurement processes wherever possible.

The HSPI was built around Crossrail’s six Target Zero pillars, which represent the areas of focus Crossrail believe are important to ensure progressive management and improving health and safety performance.

Figure 1 – Crossrail’s Target Zero Pillars of Health and Safety

Crossrail had developed an earlier scheme called Gateway, and the HSPI incorporated this existing scheme. Gateway had been developed to evaluate ‘output’ scores, and the HSPI combined these with a selection of ‘input’ metrics from each of the six pillars to provide an overall HSPI score every period for each contract.

Gateway Assessment Scheme

The Gateway Assessment Scheme was originally created as a method to promote excellence in health and safety management and share best practice across the entire Crossrail contractor community. It was based on a process called Beacon, developed by Tube Lines Ltd and later adopted by London Underground. The approach allows individual work sites to gain accreditation for operating to the highest standards of health and safety.

Crossrail incorporated the concept into the six pillars of health and safety and altered the approach from a single assessment that achieved a specified pre-set level, to one where the target level was continually raised over time, based on the ‘best in class’ elements of each pillar, as observed across the programme, on all contractor sites. As a result, all sites on Crossrail required ongoing assessment, to ensure they continued to improve and evolve in line with what others were doing. Initially each contractor received two assessments per year. This reduced to once per year for contracts as they matured and achieved higher scores.

Gateway assessments were undertaken via a site visit. In advance of a visit, contractors compiled their evidence for activities under each pillar, as described in the ‘Gateway Criteria’. The site visits included a review of this evidence, a site tour / inspection, and engagement with site operatives and management to gauge how widely health and safety activities and initiatives had reached across the site.

These site visits were not used as compliance audits against a standard or the Gateway Criteria (compliance audits were undertaken separately); they were instead used to focus on what contractors were doing to ‘raise standards’ beyond mere compliance and deliver world-class health and safety. Various other site visits, including some that were used to assess compliance, were undertaken independently of Gateway assessments as part of the overall approach to objectively measuring site safety performance. These other visits are not considered in this case study.

Following assessment, each contract was assigned a score as follows:

0 – Failed to achieve

1 – Foundation

2 – Commendation

3 – Inspiration

When the HSPI was created, it was natural that Gateway would form part of it, as it already assessed and scored contractors’ proactive efforts to exceed standard practice. However, contractor performance needed to be measured every four weeks (much more frequently than the twice-yearly Gateway assessments) and required both output and input elements.

To address this, a set of leading indicator metrics was devised, again based on the six pillars of health and safety, that could be collected and reported with sufficient frequency.
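As an illustration of how the two measures per pillar might be held together and combined into a single period score, the sketch below models the Gateway scale and the six pillars in Python. The case study does not state how the leading indicator and Gateway scores were weighted or aggregated, so the unweighted mean used here, the assumed 0 to 3 range for the leading indicator score, and the names `GatewayLevel`, `PillarScore` and `hspi_score` are assumptions for illustration only.

```python
from dataclasses import dataclass
from enum import IntEnum
from statistics import mean


class GatewayLevel(IntEnum):
    """Gateway assessment outcomes, as listed in the case study."""
    FAILED_TO_ACHIEVE = 0
    FOUNDATION = 1
    COMMENDATION = 2
    INSPIRATION = 3


PILLARS = (
    "Leadership & Behaviour",
    "Design for H&S",
    "Communications",
    "Workplace Health",
    "Workplace Safety",
    "Performance Improvement",
)


@dataclass(frozen=True)
class PillarScore:
    leading: float         # periodic leading indicator score (assumed range 0-3)
    gateway: GatewayLevel  # latest Gateway assessment score


def hspi_score(scores: dict[str, PillarScore]) -> float:
    """Assumed aggregation: unweighted mean of both measures over all six pillars."""
    missing = set(PILLARS) - set(scores)
    if missing:
        raise ValueError(f"missing pillars: {sorted(missing)}")
    values = []
    for pillar in PILLARS:
        score = scores[pillar]
        if not 0 <= score.leading <= 3:
            raise ValueError(f"leading score out of range for {pillar}")
        values.extend([score.leading, float(score.gateway)])
    return mean(values)
```

Because the Gateway score changes only when a new assessment takes place, a structure like this would carry the latest Gateway level forward from period to period while the leading indicator score is refreshed every four weeks.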

HSPI Indicators – Phase 1, 2012

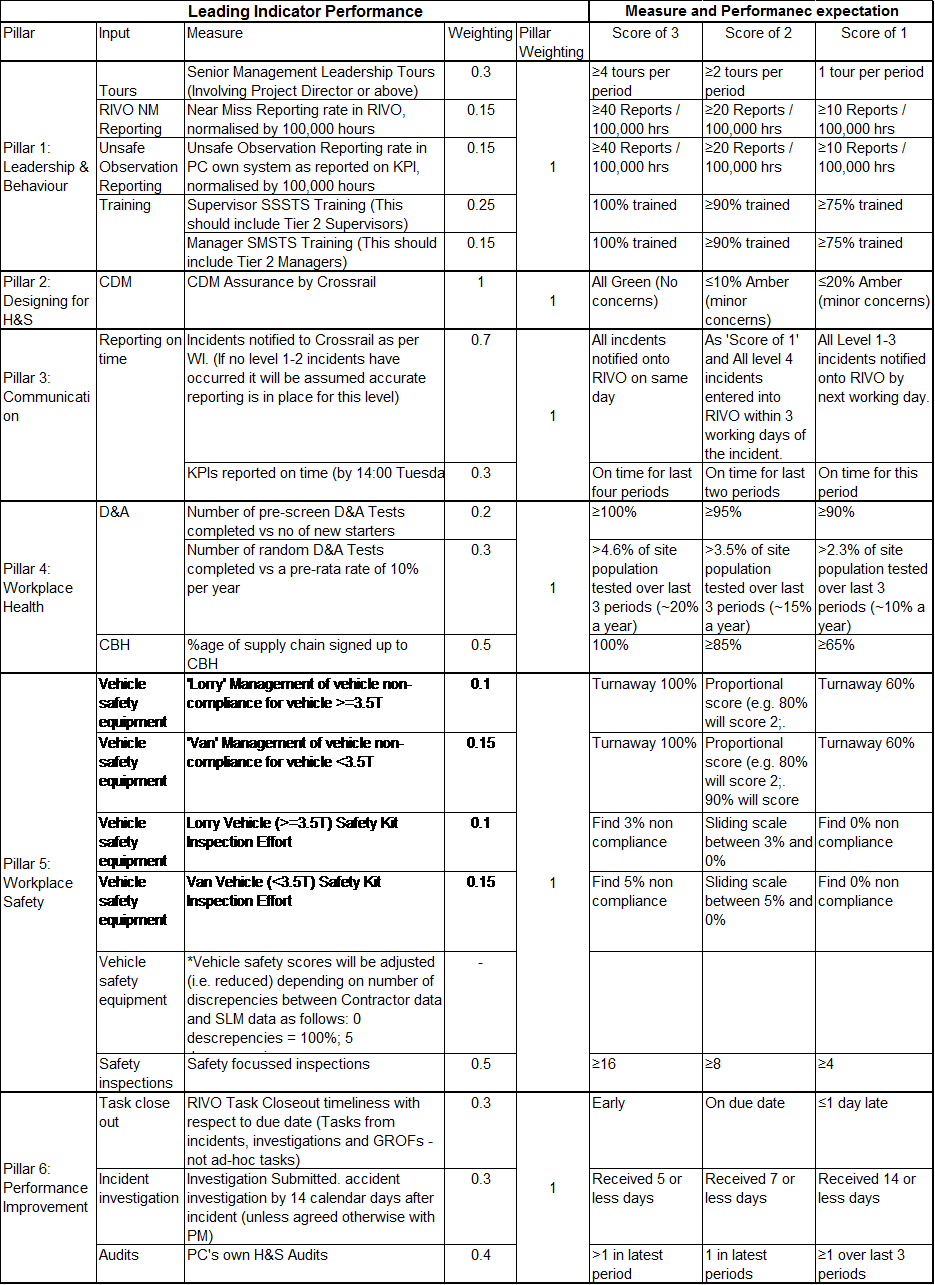

This first set of performance metrics is shown below:

Figure 2 – First set of leading Indicator mandatory metrics in 2012

These metrics were based on the areas Crossrail believed to be most important to bring tangible improvement to health and safety performance. The magnitude of each requirement was set against existing performance data, such that achieving a score of 3 in any criterion would be a significant, but achievable, challenge for even the best performing contracts.

HSPI Phase 2, 2014

Following its launch in 2012, the HSPI ran unaltered for over a year, by which point, the maturity and performance of Crossrail contractors meant there was scope to develop the metrics. Revised metrics were agreed and launched in Period 1 (April) of 2014 to enhance the HSPI, ensure it remained relevant and that contractor performance improved. The new metrics were also designed to be more challenging and to ensure they would drive continuous improvement.

These new metrics gave rise to the HSPI 2. Within this revised format, contractors were required to provide their own metrics under each pillar, in addition to those stipulated by Crossrail. The rationale behind this was that the contractors on Crossrail were mature, professional organisations who understood their own health and safety challenges and were therefore best able to set relevant targets. This change also meant targets could be more bespoke to the type of work being undertaken and the scale of the organisation undertaking it.

This change was initially well received by Crossrail’s contractors, with most agreeing it was the correct approach and a progressive change. It was, however, reliant on all contractors being equally diligent in setting themselves challenging targets. Whilst Crossrail did review each contractor’s proposed measures, there remained a significant degree of variation in the volume, type and achievability of these measures across the spectrum of contractors.

Consequently, when contractor performance using these measures was compared, many justifiably felt they were being unfairly measured against others i.e. there seemed to be different criteria being used to demonstrate success. As a result of this, formal scores from the HSPI 2 reverted to Crossrail mandatory measures only, excluding contractor proposed measures. Contractors were still monitored on their own measures, but this was done informally and these scores were not recorded as part of their period performance measurement.

HSPI – Phase 3, 2015

The approach of annual review and an update to the performance metrics became entrenched, and each subsequent year saw a similar review, an adjustment in the score criteria and a re-launch in Period 1. Following the concerns with using contractor-set metrics, all subsequent incarnations of the HSPI utilised Crossrail-set mandatory measures only. This ensured that all contractors were measured using a common approach and also allowed Crossrail to adjust the measures depending on areas of concern, changes in the operation, the effectiveness / impact of existing measures and feedback from contractors. This annual review approach also ensured the HSPI remained challenging and contractors’ efforts to improve did not stagnate.

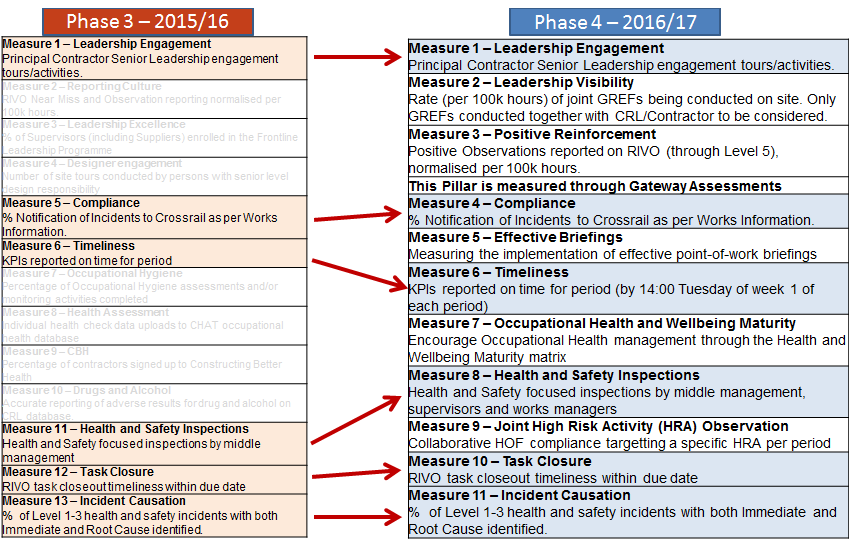

Typically, in each annual review, some measures would remain the same, others would be made more challenging (by increasing the volume required), some would be removed and some new ones would be introduced. The diagram below shows how this review process worked when moving from the HSPI phase 3 (2015-16) to the HSPI phase 4 (2016-17):

Figure 3 – Changes to the HSPI metrics from the HSPI 3 to the HSPI 4

Definition of terms used in Figure 3

CBH Constructing Better Health – national scheme for the management of occupational health in the construction industry

CHAT CBH database used to record occupational health data

CRL Crossrail Limited

GREF Golden Rules Engagement Form – record of senior level H&S site tour

HOF High risk Observation Form – record of risk specific surveillance activity

HRA High Risk Activity – nine specific high risk activities e.g. lifting operations, working at height etc

KPIs Key Performance Indicators

RIVO Database used to capture health and safety information

It should be noted that as new, more challenging measures were introduced, there would be a subsequent initial downturn in scores across the programme. This fact required communication in advance of the first reporting period of each year, to ensure expectations were managed and reactions to the drop were not disproportionate.

HSPI Phase 4, 2016

With each iteration, the approach to reviewing the measures was enhanced to increase the collaboration and input from contractors and to maximise the feedback from Crossrail site teams. The process of review for the HSPI 4 was initiated five months prior to its launch.

In the example above, it can be seen the number of metrics reduced from the thirteen that were set for HSPI 3 to eleven measures for HSPI 4. Other changes were made for a variety of reasons driven by the behaviours / performance of contractors and the areas Crossrail deemed to be in need of most focus. For example, following a number of serious incidents where the root cause related to communication of method and surrounding hazards, Measure 5 (relating to the effectiveness of briefings) was introduced. This was to encourage greater focus on point of work briefings, to ensure they were happening, that the quality and content were appropriate to the task being undertaken and that they accounted for work being undertaken by others in the immediate vicinity. As this was an entirely new measure, the process for recording and assessing briefings also required implementation before the HSPI 4 could launch.

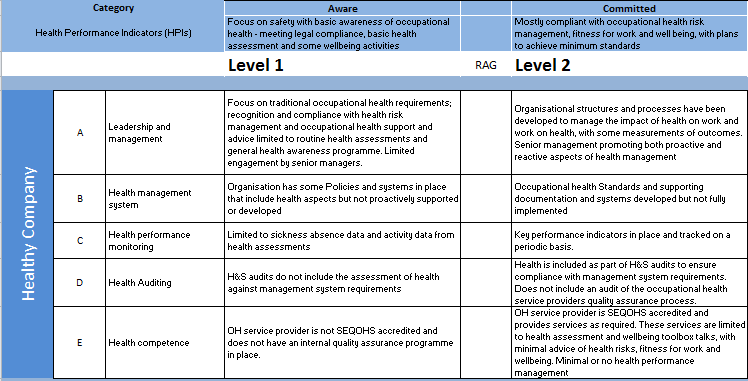

In reaction to slow progress in health and wellbeing management, a new health and wellbeing maturity matrix was developed. This allowed a more detailed review of contractors’ focus in this area, as well as providing them with a framework for developing their own approaches against ‘what good looks like’. The matrix was then included in the HSPI framework. An extract from the matrix is shown below.

Figure 4 – Extract from Health and Wellbeing Maturity Matrix

With the addition of these more sophisticated metrics, early planning was especially important to allow sufficient time to devise and embed the associated processes.

One other significant change in the HSPI 4 was the removal of the ‘Design’ metric. In this version, Design was measured through Gateway only. This further reflected the changing nature of the project as by this point, the majority of final design work had been completed. Whilst there were still design areas to be considered for temporary works, it proved impossible to design a metric that would work effectively given the diverse nature of work activity being undertaken. It was agreed that having a measure for the sake of it did not add value and the metric was therefore dropped.

Throughout the various incarnations of the HSPI metrics, the approach to Gateway remained largely unchanged. Developments were relatively minor and primarily aimed at clarifying requirements for contractors through clearer Gateway Criteria and more opportunity for pre-site-visit preparation. The frequency with which Gateway assessments were undertaken was reduced from twice per year to once per year for established contracts. This change also meant that contracts had less opportunity to use Gateway as the medium to improve their overall HSPI score, and had to focus on the other measures.

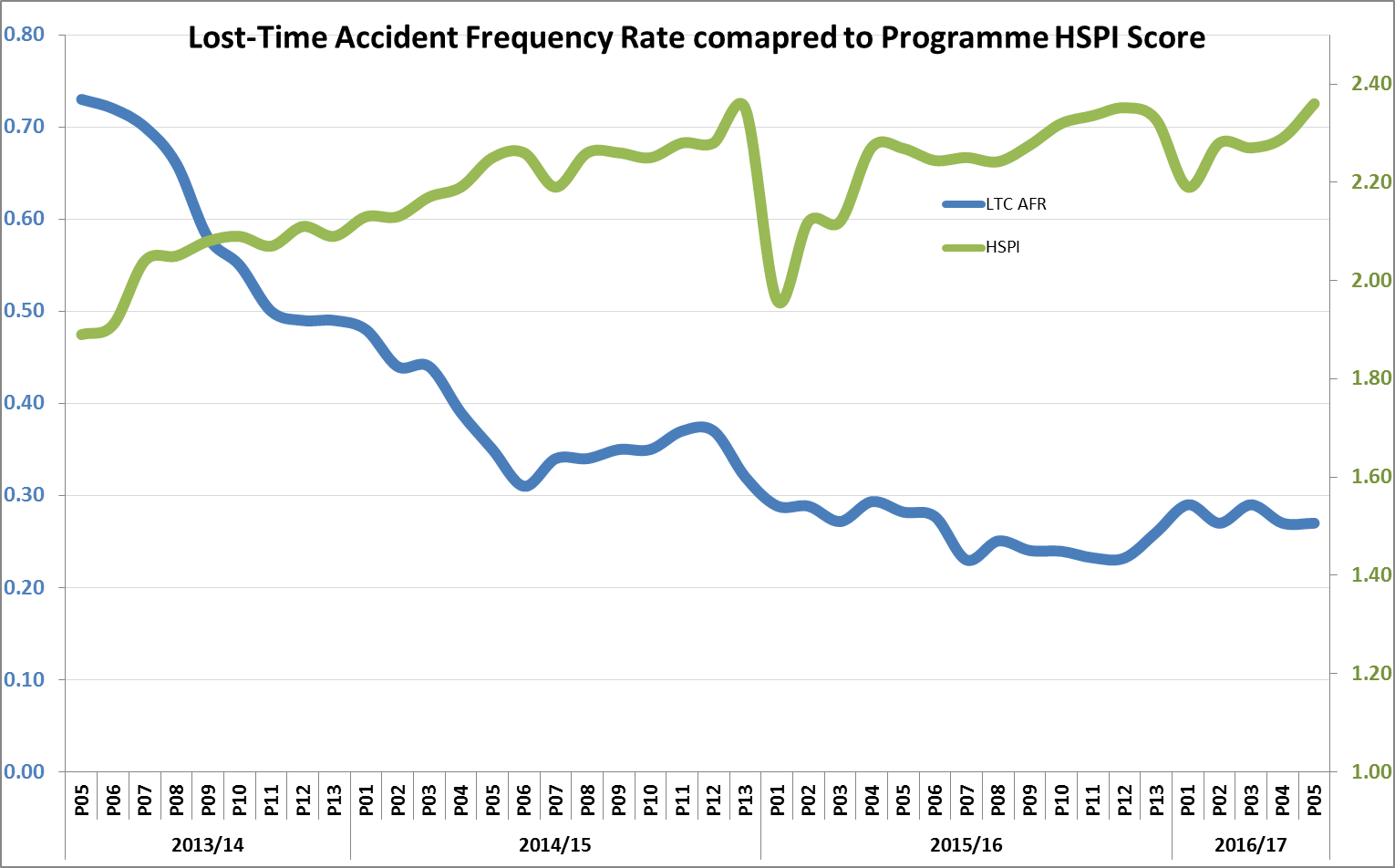

The HSPI has proved to be a successful tool for Crossrail, with increasing HSPI scores seen to correlate with decreasing incident and injury rates. The contracts that put the most effort into the areas the HSPI measures have been those that consistently showed the best health and safety performance.

The process of revising the metrics annually, whilst time consuming and certainly challenging, proved to be an effective method of developing the behaviours of contractors in the areas Crossrail believed to be important. These improved behaviours will be taken away and into the wider industry as work on Crossrail is completed.

Whilst direct benchmarking with other organisations using the HSPI is not possible, the more traditional lagging indicators have been used to compare performance, and Crossrail have performed favourably.

The approach has been widely shared across industry and a number of other large infrastructure projects are planning on implementing similar approaches.

Lessons Learned

- Devising consistent metrics – creating metrics that worked for all types of activities was challenging, requiring significant consultation with contractors / client teams.

- Adequate planning – early planning is required to ensure metrics work prior to launch. Where possible, a period of ‘dry running’ is recommended.

- Setting own measures – whilst the idea of contracts setting their own metrics had merit, and was aligned with a general philosophy of making maximum use of contractors’ existing systems, in practice it does not work when contracts are to be compared with each other. Metrics must be consistent for all so that contracts are measured against a common standard.

- Normalisation – some metrics, particularly those relating to inspections and engagement, were normalised using ‘hours worked’ to provide the common scale. This was found to be the fairest way to account for the variation in size of contracts measured using the HSPI.

- Frequency of review – the low frequency of Gateway assessment meant it was vital contracts made the most of their assessments and maximised their opportunity to progress. Not all contracts performed as well as they would have wanted in their assessment and were then left waiting for a long period of time before their next opportunity for assessment. Whilst they invariably ensured they did not underperform again, HSPI scores were initially more challenging than they may have needed to be for them.

- Don’t measure for the sake of measuring – the ‘Design’ and ‘Health’ pillars proved to be the most difficult to identify suitable metrics for. The advent of the Health and Wellbeing matrix solved this issue for the ‘Health’ pillar but Design remained a challenge and the metric was dropped in the HSPI 4 by which time it had become less relevant.

- Communicating change – with the introduction of new measures each year, there was a dip in score for each contract and across the programme. Whilst this was expected by those devising the metrics, it was not always as clear as it might have been to leaders across Crossrail, resulting in unnecessary reaction to the apparent ‘drop in performance’. There are lessons here for communications.

- Influencing behaviour – the HSPI proved to be effective at directing contractors’ behaviour, initiatives and focus towards areas Crossrail identified as important, e.g. point of work briefings, collaborative inspections and a focus on incident investigation and close out.

- Managing expectations – achieving a score of 3 in the HSPI was intended to be challenging. Contractors often pushed back that the challenge was too great and that the top score was unachievable. There was a need therefore to frequently remind all involved that the measures were the same for all and the intention was not for everyone to score 3 on everything straight away, but instead was an incentive to push them to improve.

- Understanding the scale – each period saw a huge amount of data collecting, processing and reviewing. Changing the data collected each year meant the process used to collect it required similar review (in advance). The time and cost of making the required changes to the data collection process each year needs to be factored into any review.
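The normalisation lesson in the list above can be illustrated with a short Python sketch. The case study says only that ‘hours worked’ provided the common scale; the per-100,000-hours basis, the function name `rate_per_hours` and the example figures are assumptions chosen for illustration, not values Crossrail used.

```python
def rate_per_hours(count: int, hours_worked: float, basis: float = 100_000) -> float:
    """Return the number of activities per `basis` hours worked.

    `basis` is an assumed reporting scale; the source does not specify one.
    """
    if hours_worked <= 0:
        raise ValueError("hours_worked must be positive")
    return count * basis / hours_worked


# Illustrative values only, not Crossrail data.
large_contract = rate_per_hours(count=120, hours_worked=400_000)  # 30.0
small_contract = rate_per_hours(count=30, hours_worked=50_000)    # 60.0
```

On raw counts the larger contract appears four times as active; once normalised by hours worked, the smaller contract is carrying out twice as many inspections per hour of exposure, which is the kind of size effect the normalisation was intended to remove.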

Recommendations for Future Projects

- Ensure early consultation and planning prior to any review of metrics. Crossrail would aim to start the process at least 4 months prior to planned launch.

- If contractors are to set their own measures, exclude these from any comparison ‘league tables’.

- Do not create a metric for the sake of having a metric.

- Ensure data collection processes are adequate prior to attempting to implement a similar index.

- Prior to a change in metrics that will see a dip in performance, communicate with affected stakeholders extensively to ensure expectations are managed.

- Review the effectiveness and appropriateness of metrics regularly to ensure they remain relevant and challenging and ensure those metrics which lose their relevance are phased out.

- Ensure the process for undertaking any required assessments is well communicated and understood and is resilient to staff and organisational change to avoid delays.

- Consider creating an assessment process that can be applied more frequently than Gateway to allow greater opportunity for contractors to improve their scores via their outputs.

Conclusion

The HSPI fulfilled the requirement to provide Crossrail with a structured reporting framework that drove health and safety excellence among Crossrail’s contractors. It was leading indicator focused and consistent. This measure was used to balance against the existing lagging indicators that were already in use and provided the Crossrail leadership team with a mixed, comprehensive suite of leading and lagging health and safety metrics.

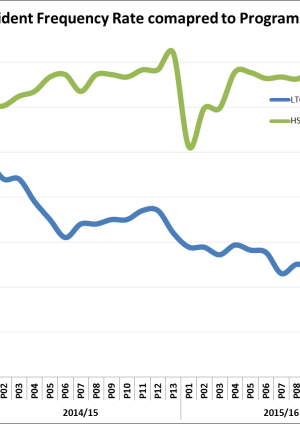

It formed part of the overall Target Zero strategy to improve health and safety performance. Health and safety performance showed steady improvement over the course of the programme supporting the strategy adopted. Whilst a causal link with specific initiatives and approaches taken cannot be proven definitively, Crossrail firmly believe the Target Zero Strategy, including the use of the HSPI was a significant factor in delivering this improvement. The graph below showing the lost time accident rate compared to HSPI illustrates this parallel improvement.

Figure 5 – Graph of lost-time accident frequency rate compared to HSPI over three years.

Positive performance in HSPI was seen to occur in contracts that also showed similar improvements and high performance in reducing incident and injury rates. This supports the view that the approach taken was effective and that the correct metrics and Gateway criteria were utilised.
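One simple way to check whether HSPI scores and accident rates move together across periods is to compute a correlation coefficient over the two series. The Python sketch below is a minimal illustration: the function name `pearson` and the two short series are placeholders, not Crossrail data, and a strong negative coefficient would indicate only that higher HSPI scores coincide with lower accident rates, not that one causes the other.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Placeholder per-period values for illustration only.
hspi_by_period = [1.8, 2.0, 2.1, 2.4]
lost_time_rate_by_period = [0.30, 0.27, 0.25, 0.18]

r = pearson(hspi_by_period, lost_time_rate_by_period)
# r is negative here: the placeholder series move in opposite directions.
```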

Reviewing the metrics annually, so they remained relevant and challenging, helped maintain progress and ensured contractors’ focus was on the areas where improvement or change was required.

There were challenges in terms of data collection, ensuring metrics were relevant and resourcing Gateway assessments, but the benefits of the approach Crossrail took are apparent. A similar approach to utilise leading indicators to be considered alongside lagging indicators has now been implemented successfully on a number of other large infrastructure projects, demonstrating that this approach has already changed the industry.

References

[1] The Baker Report – The Report of the BP U.S. Refineries Independent Safety Review Panel (2007). BP Texas City Refinery.

-

Authors

Steve Crofts BSc, CMIOSH - Crossrail Ltd

Steve Crofts is the Head of Health and Safety Improvements at Crossrail. He is responsible for programme-wide H&S initiatives and communications as well as H&S management systems, performance reporting and Occupational Health and Wellbeing. Steve started his career in the Oil and Gas industry but quickly moved to the Railway sector, where he now has over 16 years’ experience, primarily in and around London Underground. He has been with Crossrail since March 2014.

-

Acknowledgements

Brendan Steenkamp, Health and Safety Information Manager, Crossrail

Steve Hails, Director of Health and Safety, Crossrail

-

Peer Reviewers

Diana Salmon RGN, BSc (Hons), MSc, CertEd, CFIOSH, CMFOH, MIIRSM, Occupational Hygiene, Health and Safety Services (OH2S2)

Joseph Nip MSc, CMIOSH